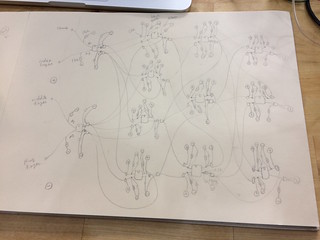

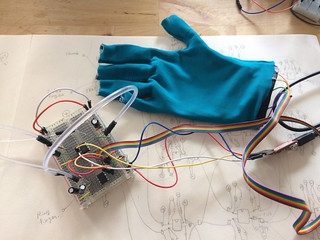

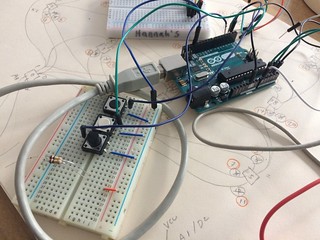

This time I am trying out a bigger network: 4 sensor inputs from the glove, read by 2 ATtiny chips as the input layer, then 4 neurons in hidden layer 1, 3 neurons in hidden layer 2, and 3 neurons in the output layer, to recognize 3 different hand gestures.
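To make the 4-4-3-3 layout concrete, here is a minimal forward-pass sketch in plain Python. It is not the code from the repository: the sigmoid activation, the random weights, and the names `make_network` and `forward` are assumptions for illustration only. In the real project the weights come from training.

```python
import math
import random

# Layer sizes from the text: 4 inputs, 4 hidden, 3 hidden, 3 outputs.
LAYER_SIZES = [4, 4, 3, 3]


def sigmoid(x):
    """Squash a weighted sum into the range (0, 1). Assumed activation."""
    return 1.0 / (1.0 + math.exp(-x))


def make_network(sizes, seed=0):
    """Build one (weights, biases) pair per connection between layers.

    The weights are random placeholders, not trained values.
    """
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [rng.uniform(-1, 1) for _ in range(n_out)]
        layers.append((weights, biases))
    return layers


def forward(layers, inputs):
    """Propagate the inputs through every layer and return the output activations."""
    activations = list(inputs)
    for weights, biases in layers:
        activations = [
            sigmoid(sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations


network = make_network(LAYER_SIZES)
# Hypothetical sensor values, already scaled to 0..1.
outputs = forward(network, [0.2, 0.9, 0.8, 0.1])
# The most active output neuron is taken as the recognized gesture.
gesture = max(range(len(outputs)), key=outputs.__getitem__)
print(outputs, gesture)
```

Each neuron only needs the outputs of the previous layer, which is why the same structure can be split across separate microcontrollers, one per neuron.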

The network was first prototyped and tested in Python. Here is the Python code >> https://github.com/mikst/A.I.F.L./blob/master/python/ANN_class_hand_model.py

The data used in this code comes from a glove I made, equipped with 4 textile bend sensors on the fingers (thumb, index, middle, ring), and was collected by me performing these gestures. The input range is 0-1023, since the Arduino reads analog inputs at 10-bit resolution. Here is the original data >> https://github.com/mikst/A.I.F.L./blob/master/python/newGlove_Data01.txt
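Raw 10-bit readings are usually scaled before they go into a network. The sketch below shows one common way to do that, dividing by 1023 to get values between 0 and 1. Whether the linked script scales the data exactly like this is an assumption, and the sample readings are made up.

```python
ADC_MAX = 1023  # largest value of a 10-bit analog reading


def normalize(raw_readings):
    """Clamp each raw reading to 0..1023 and scale it to 0.0..1.0."""
    return [min(max(r, 0), ADC_MAX) / ADC_MAX for r in raw_readings]


# Hypothetical readings in the order thumb, index, middle, ring.
sample = [0, 512, 1023, 300]
scaled = normalize(sample)
print(scaled)
```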

Schematics, materials, and breadboard prototypes

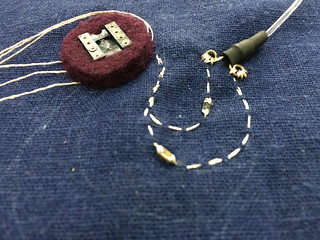

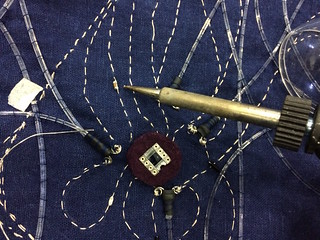

Single neuron embroidery test with SMD parts.

Draping on a doll to sketch the sleeve design

Placement on the fabric. The IC socket is embedded in the felt to reduce the height, and connections are made with copper conductive thread (Karl Grimm thread) so they can be continued as embroidered connections. The back of the felt is reinforced with hot glue to protect the solder joints.

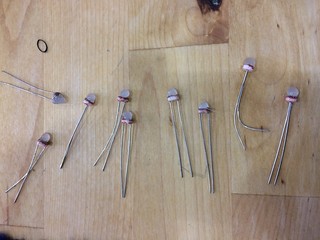

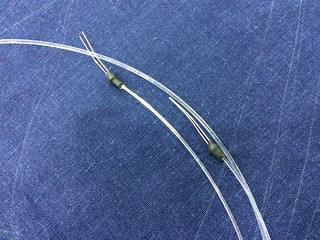

I added a drop of hot glue on the LDR to create a diffusing layer, so the light from the thin optic fiber outlet gets distributed over it. It also helps to keep the optic fiber in place. On the LED side, I needed to attach more than one optic fiber to a single LED. The connections between the optic fibers and the LEDs and LDRs are made with black shrink tubing. It takes quite a long time to make these parts.

All the LED-LDR parts were tested with PWM values of 55-255 and the results listed, so they can be used later to calibrate each neuron.
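One way such a list could be used for calibration is as a lookup table with linear interpolation between the measured points. The sketch below is only an illustration of that idea: the table values are invented, the name `reading_to_pwm` is hypothetical, and it assumes the LDR reading rises as the PWM value rises (which depends on how the voltage divider is wired).

```python
from bisect import bisect_left

# Hypothetical measurements for one LED-LDR pair: (PWM value sent, LDR reading seen).
CALIBRATION = [(55, 120), (105, 310), (155, 520), (205, 700), (255, 860)]


def reading_to_pwm(reading, table=CALIBRATION):
    """Estimate which PWM value produced an LDR reading.

    Interpolates linearly between the two nearest measured points and
    clamps readings that fall outside the measured range.
    """
    pwms = [p for p, _ in table]
    readings = [r for _, r in table]
    if reading <= readings[0]:
        return float(pwms[0])
    if reading >= readings[-1]:
        return float(pwms[-1])
    i = bisect_left(readings, reading)
    r0, r1 = readings[i - 1], readings[i]
    p0, p1 = pwms[i - 1], pwms[i]
    return p0 + (p1 - p0) * (reading - r0) / (r1 - r0)


print(reading_to_pwm(415))
```

With one table per LED-LDR pair, each neuron could translate what it sees back into the value the previous neuron meant to send.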

The process of embroidering. It is tricky to keep the fibers in the right places.

The embroidery process is finished.

I had a meeting with Ben (the pattern maker) to look at possible ways to make a garment with this technique. The fabric does not drape as well as I thought it would, due to the stiffness of the optic fibers.

The necessary components (resistors, capacitors) are added onto the embroidery. I chose to use SMD components, soldering them directly onto the copper thread and cutting away the extra connections. It is finicky to work with, but manageable.

Finished embroidered ANN

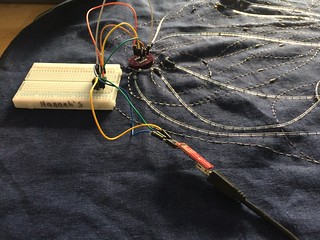

Now the ATtiny85s (set to the 1 MHz internal clock) are programmed with a USB TINY programmer. The 2 input neurons run this code (https://github.com/mikst/A.I.F.L./tree/master/arduino/ATTINY_Neuron_inputLayer) and the hidden and output layer neurons are programmed with this code (https://github.com/mikst/A.I.F.L./tree/master/arduino/ATTINY_Neuron_embroidery_gloves). For the hidden layer neurons, one needs to change nodeNumber to match the position/connection of the neuron in the network. I collected the glove’s sensor input data with an Arduino and used it to train the Python simulator (https://github.com/mikst/A.I.F.L./blob/master/python/ANN_class_hand_model.py).

After a bit of tweaking and debugging, it seems to work quite OK. The “scissors” gesture is not recognized very well… it only works sometimes. I am not sure if this is due to a bad connection in the circuit, a poorly calibrated LDR, or a specific optic fiber that came loose and is not seeing the light well… But for now I am very happy with how it came out. I like the slow delay between the change of gesture and the result/light.

The problem turned out to be simply a power problem. All the microcontrollers are connected in parallel, and the last ones in the chain (the output layer) were not getting enough current. I have now added a few more power and ground lines (electrical connections) to the last in the chain, and it works fine. It can recognize all 3 gestures (paper, rock, scissors) smoothly.